The AI Buildout Has a Problem: Over Half of Planned Data Centers Are Stalled

The artificial intelligence boom has been sold as a story of unstoppable expansion. Billions in chips, billions in data centers, and a race among the world’s biggest companies to build the infrastructure that will define the next era of computing. But one number cuts through that narrative: over half of planned data centers have reportedly been delayed or canceled.

That matters because the AI trade is no longer just about software ambition. It is about physical construction, energy access, financing, and whether the industry can turn extraordinary spending into durable returns. Right now, the answer is far less certain than the market’s enthusiasm suggests.

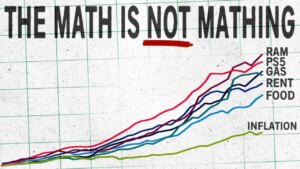

The scale of spending alone is breathtaking. Companies reportedly spent roughly $400 billion on AI infrastructure in 2025, and that figure excludes major non-public players and categories such as staffing, security, energy, and acquisitions. This is not a niche technology cycle. It is an industrial-scale capital surge, one that is expected to break records again in 2026.

Yet for all that money, the industry’s core business model remains surprisingly unsettled. Four years after ChatGPT jolted Silicon Valley into action, there is still little evidence that any AI company has achieved sustained profitability from AI services themselves. The clear winners so far have been hardware suppliers, especially Nvidia and the upstream chip ecosystem. In other words, the companies selling the tools are making money. The companies trying to turn those tools into lasting software profits largely are not.

That distinction is central to understanding why delayed and canceled data center projects matter so much. Nvidia has become the defining corporate winner of the AI era because it sits at the most profitable part of the chain. If everyone wants to build, Nvidia gets paid first. But if the actual construction of that AI future begins to slow, stall, or spread out, then the industry’s assumptions about demand become much shakier.

This is where the buildout appears to be running into trouble. Although 21.5 gigawatts of data center capacity were reportedly announced before 2027, only about 6.3 gigawatts are under active construction. Many projects remain stuck in early stages, waiting on foundations, fit-outs, utility approvals, or local acceptance. Some are facing community pushback. Others are blocked by electrical infrastructure that is simply not ready. The result is a widening gap between what has been announced and what is actually getting built.
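To put that gap in proportion, here is a minimal Python sketch using only the two capacity figures quoted above. The numbers are taken as cited and are not independently verified, and the function name is purely illustrative. Note that "not yet under construction" is a broader category than "delayed or canceled", so this ratio is not the same statistic as the headline figure.

```python
# Capacity figures as quoted in the article (not independently verified).
ANNOUNCED_GW = 21.5
UNDER_CONSTRUCTION_GW = 6.3


def construction_share(announced_gw: float, building_gw: float) -> float:
    """Return the fraction of announced capacity that is under construction."""
    if announced_gw <= 0:
        raise ValueError("announced capacity must be positive")
    return building_gw / announced_gw


share = construction_share(ANNOUNCED_GW, UNDER_CONSTRUCTION_GW)
gap_gw = ANNOUNCED_GW - UNDER_CONSTRUCTION_GW

print(f"Under active construction: {share:.1%}")
print(f"Announced but not under construction: {gap_gw:.1f} GW ({1 - share:.1%})")
```

On these figures, a little under 30 percent of announced capacity is actually being built, leaving roughly 15 gigawatts that exist only on paper for now.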

That gap creates a serious market question. If AI companies and cloud providers are buying hardware at an aggressive pace, but a large share of the physical sites needed to house that hardware are not moving forward, what happens next? One answer is that inventory begins to pile up faster than deployment. Nvidia’s inventory has reportedly risen sharply, doubling from the previous year and quadrupling from 2024 levels. That does not prove an immediate glut, but it does suggest the possibility that the industry is buying ahead of reality.

The bottleneck is not just land or permits. It is power. AI data centers require enormous electricity loads, and electrical infrastructure has become one of the hardest parts of the whole system to scale. Transformers, substations, interconnection equipment, and supporting components have all become more expensive and more constrained. Some are imported from countries now facing tariffs or supply disruptions. Even where a building can be constructed, it may still sit idle waiting for enough power to run the machines inside.

That problem becomes even more serious when energy prices rise. If electricity and fuel costs continue climbing, older hardware may become uneconomic faster than expected. GPUs may be depreciated over about six years for accounting purposes, even though their practical life may be closer to three years because of rapid model improvements and growing energy demands. If that is true, some of the profits being celebrated today may look less robust under a harsher economic reality.
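The six-versus-three-year point is easier to see with numbers. The sketch below assumes simple straight-line depreciation with no salvage value and a purely hypothetical $1,000,000 hardware purchase. Neither the purchase price nor the accounting method comes from any company's filings; they are illustrative assumptions only.

```python
def annual_expense(cost: float, life_years: int) -> float:
    """Straight-line depreciation expense per year (no salvage value assumed)."""
    return cost / life_years


def book_value(cost: float, life_years: int, years_elapsed: int) -> float:
    """Value still carried on the books after a number of years have elapsed."""
    remaining_years = max(life_years - years_elapsed, 0)
    return cost * remaining_years / life_years


# Hypothetical purchase price, chosen only to make the arithmetic readable.
GPU_COST = 1_000_000
ACCOUNTING_LIFE = 6   # years, the schedule described in the article
PRACTICAL_LIFE = 3    # years, the shorter useful life the article suggests

booked = annual_expense(GPU_COST, ACCOUNTING_LIFE)
economic = annual_expense(GPU_COST, PRACTICAL_LIFE)
stranded = book_value(GPU_COST, ACCOUNTING_LIFE, PRACTICAL_LIFE)

print(f"Yearly expense on a 6-year schedule: ${booked:,.0f}")
print(f"Yearly expense on a 3-year schedule: ${economic:,.0f}")
print(f"Book value left when the hardware is practically obsolete: ${stranded:,.0f}")
```

Under these assumptions, the longer schedule books only half as much expense each year, and half of the purchase price is still sitting on the balance sheet at the point the hardware may no longer be competitive. That is the sense in which reported profits could look flattering relative to the underlying economics.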

This is one of the least appreciated risks in the AI boom. The buildout is not just expensive upfront. It may also age quickly. Every time a new model generation raises performance expectations, existing hardware becomes less compelling. And if energy costs make older systems expensive to operate, the useful life shrinks further. That creates a cycle in which infrastructure must be replaced sooner, financed more aggressively, and justified under tougher economics than investors may currently assume.

Another warning sign is the industry’s tendency to overorder when it fears future shortages. Companies buy early because they worry chips, transformers, cooling systems, or power access will become harder to secure later. But that behavior can create a classic bullwhip effect. Everyone orders more than they need, suppliers ramp production to meet that perceived demand, and then the market discovers that actual deployment is lagging. In a sector moving this fast, that kind of mismatch can show up suddenly and painfully.
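The bullwhip dynamic described above can be illustrated with a toy model. In this sketch, each tier of the supply chain passes along the orders it receives plus an extra amount proportional to the most recent change, a crude stand-in for ordering ahead of a feared shortage. The demand series, the tier labels, and the `overreaction` parameter are all hypothetical and are not calibrated to any real market data.

```python
def amplify_orders(incoming, overreaction=1.0):
    """Orders one tier places upstream, given the orders it receives.

    Each period the tier orders what it just received plus an extra
    amount proportional to the latest change in incoming orders.
    Orders are floored at zero.
    """
    orders = []
    previous = incoming[0]
    for current in incoming:
        change = current - previous
        orders.append(max(current + overreaction * change, 0.0))
        previous = current
    return orders


def swing(series):
    """Peak-to-trough range of a series of orders."""
    return max(series) - min(series)


# Hypothetical end-user demand: a modest 10 percent bump that later fades.
end_demand = [100, 100, 110, 110, 110, 100, 100]

# Pass the signal through two upstream tiers.
tiers = [end_demand]
for _ in range(2):
    tiers.append(amplify_orders(tiers[-1]))

labels = ["end demand", "buyer orders", "supplier orders"]
for label, series in zip(labels, tiers):
    print(f"{label:>15}: swing = {swing(series):.0f}")
```

In this toy setup, a 10-unit swing in end demand becomes a 30-unit swing one tier up and a 70-unit swing two tiers up. Real supply chains are far messier, but the direction of the effect is the point: small changes at the deployment end can look like much larger changes to the suppliers furthest upstream.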

The financial risks do not stop with chipmakers. Large private credit firms and major capital providers have poured money into AI-linked infrastructure projects, often on the assumption that demand growth will remain overwhelming. But if delays mount, power remains constrained, and downstream AI products stay weakly profitable, then those investments begin to look less like inevitable winners and more like highly leveraged bets on a still-unfinished market.

None of this means AI demand disappears. The technology is real, adoption is real, and the infrastructure race may continue longer than skeptics expect. Markets can stay enthusiastic well past the point where underlying strain becomes obvious. But the headline figure gets at something important: a boom this large becomes much harder to defend when more than half of its planned physical backbone is being delayed or scrapped.

That is what makes this moment so revealing. The AI industry is spending like a mature utility, hyping like a startup, and profiting mainly at the hardware layer. Meanwhile, the actual construction pipeline appears much less certain than the public narrative implies. The risk is not that AI vanishes. It is that expectations, purchases, and valuations have all run ahead of what the real-world buildout can support.

For now, Nvidia remains the dominant winner, because the industry is still ordering the machinery. But if delayed and canceled data centers continue to pile up, the market will eventually have to confront a harder question: how much of this boom is based on actual deployment, and how much is based on companies buying into a future that is arriving more slowly than expected?

That is when AI stops being just a technology story and starts looking like what it increasingly is: a capital cycle.

All writings are for educational and entertainment purposes only and do not provide investment or financial advice of any kind.